What is a Self-Organizing Network (SON)?

In the dynamic realm of networking, where connections between devices and systems are constantly evolving, the concept of self-organizing networks (SONs) has emerged as a revolutionary paradigm. SONs represent a…

AI vs ML vs Statistics – What Are the Differences?

In the realm of data science, three pillars stand prominently: Artificial Intelligence (AI), Machine Learning (ML), and Statistics. Each domain holds a distinct place in shaping our understanding of data…

Nesterov Momentum: Optimized Convergence in Gradient Descent

In the realm of optimization algorithms, the Nesterov Momentum Algorithm stands as a beacon of efficiency and speed, revolutionizing the landscape of gradient-based optimization techniques. Born from the foundational concepts…

Machine Learning Regression Algorithms & Models With Example

Machine learning, a subfield of artificial intelligence, is a powerful approach to extracting insights and making predictions from data. Within machine learning, regression is a fundamental technique that plays a…

Learning Rate Scheduler Explained with an Example in PyTorch

In the ever-evolving landscape of artificial intelligence and machine learning, fine-tuning a model to perfection often hinges on one critical hyperparameter: the learning rate. The learning rate, the magnitude at…

Sklearn Perceptron Learning Algorithm Explained

Sklearn Multilayer Perceptron Learning Algorithm Explained: In the ever-evolving landscape of artificial intelligence and machine learning, there are foundational concepts that serve as cornerstones to the understanding of more…

Supervised Learning Types & Methods Explained

Supervised learning is a fundamental and powerful concept in the field of machine learning, where computers are trained to make predictions or decisions based on labeled data. It’s a method…

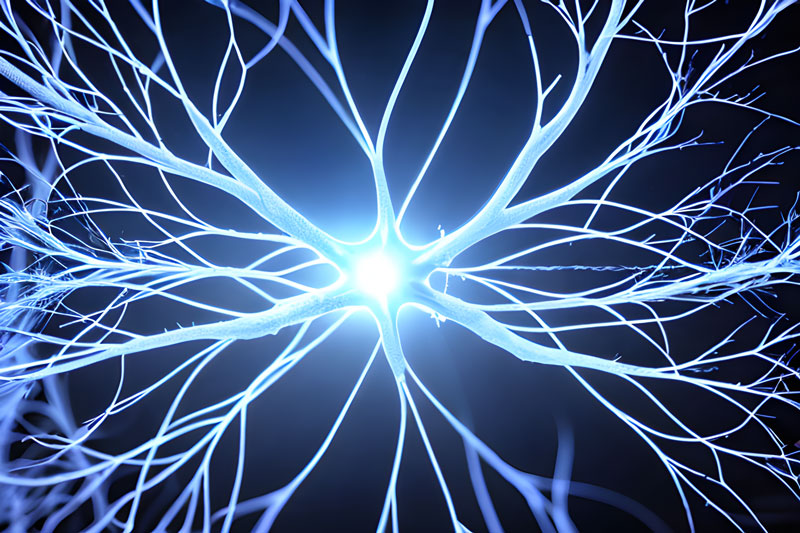

Unlocking the Secrets of the Brain: A Dive into Hebbian Learning

The human brain, with its intricate web of neurons and synapses, has long fascinated scientists and researchers. How does it store memories, learn new information, and adapt to its surroundings?…

ReLU Activation Function in Torch & Keras | Code with Explanation

The ReLU (Rectified Linear Unit) activation function is a popular and widely used activation function in neural networks. It is a simple mathematical function that introduces non-linearity into the network,…

CapsNet | Capsule Networks Implementation in Keras & PyTorch

What is CapsNet | Capsule Networks Implementation in Keras or PyTorch: CapsNet, short for “Capsule Network,” is a type of neural network architecture proposed by Geoffrey Hinton and his…